RAG vs CAG vs KAG: Choosing the Right Augmentation Strategy for Your AI System

So you’ve built your first RAG system following our guide to RAG fundamentals. It’s working. Your chatbot can answer questions about your documentation, your customer support team is happy, and you’re feeling pretty good about this whole AI thing. Then reality hits.

Your users start complaining about slow response times. You notice the system retrieving the same documents over and over for common questions. And when someone asks a complex question that requires connecting multiple pieces of information? Your RAG system kind of falls apart.

Here’s the truth nobody tells you upfront: RAG isn’t always the answer. Sometimes you need CAG. Sometimes you need KAG. And most of the time, actually you need a combination of all three.

In this post, we’ll break down when to use each approach, why they exist, and how to choose the right strategy for your specific use case. No buzzwords, no hand-waving. Just practical guidance you can use today.

The Problem with “RAG for Everything”

Let’s start with a hard truth: RAG has limitations. If you’ve been running a RAG system in production, you’ve probably noticed one or more of these issues:

- Latency Problems: Every query triggers a vector search, retrieval, and then generation. That’s fine for occasional queries, but what happens when 100 users ask “What are your business hours?” within the same hour? You’re doing the same expensive retrieval operation 100 times.

- Lack of Reasoning: RAG retrieves relevant chunks, but it doesn’t understand relationships between concepts. Ask it “Which products in our catalog are suitable for customers who bought Product X?” and it struggles because it can’t reason about product relationships it can only retrieve individual product descriptions.

- Context Overload: When a query requires information from 20 different documents, RAG tries to stuff all that context into the LLM’s prompt. This leads to longer processing times, higher costs, and often worse answers because the LLM gets overwhelmed.

- No Memory of Patterns: Your system answers the same frequently asked questions thousands of times, re-retrieving and re-generating identical responses. That’s not just inefficient it’s wasteful.

These aren’t failures of RAG. They’re just signs that you need a more sophisticated approach. Enter CAG and KAG.

Understanding the Three Approaches

RAG: Retrieval-Augmented Generation (The Bridge)

Quick Recap: RAG connects your LLM to external data sources by retrieving relevant documents and using them as context for generation. It’s the bridge between static model knowledge and dynamic, up-to-date information.

Best For:

- Dynamic content that changes frequently

- Large document collections where you can’t predict what users will ask

- Scenarios where you need recent information (news, updates, changelogs)

Example Use Case: A documentation chatbot where engineers ask questions about different API endpoints. The docs change weekly, and queries are unpredictable.

CAG: Cache-Augmented Generation (The Memory)

CAG is all about efficiency and speed. Instead of retrieving and generating fresh responses every time, CAG caches frequently accessed results. Think of it as giving your AI system a short-term memory.

How It Works:

- User asks a question

- System checks if an identical or semantically similar question has been asked before

- If yes, return the cached response instantly

- If no, perform retrieval/generation and cache the result

Best For:

- High-traffic applications with repetitive queries

- Scenarios where response time is critical (customer-facing chatbots)

- Use cases with a predictable set of common questions

Example Use Case: An e-commerce chatbot where 70% of queries are variations of “What’s your return policy?” or “How do I track my order?” Cache those answers and serve them in milliseconds instead of seconds.

Key Insight: CAG doesn’t replace RAG it sits in front of it. You still need RAG for the long tail of unique queries. CAG just makes your most common queries blazingly fast.

KAG: Knowledge-Augmented Generation (The Reasoner)

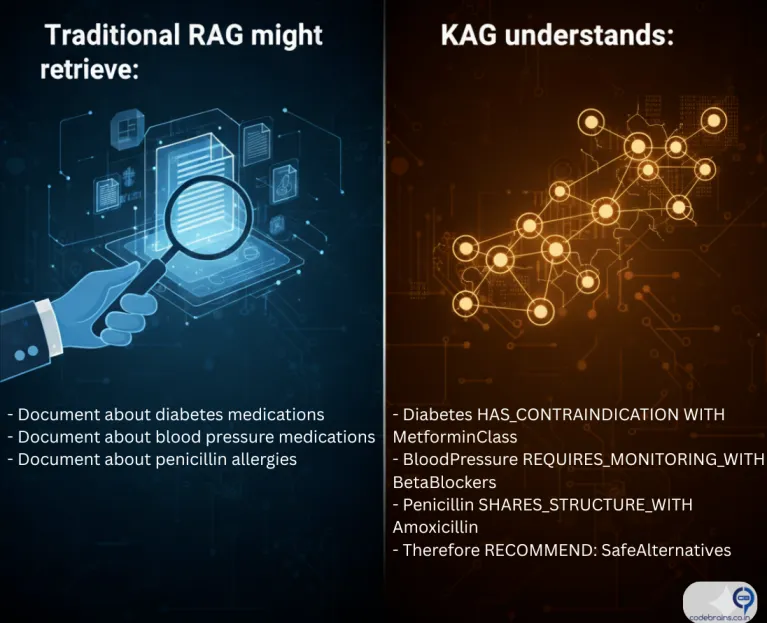

KAG takes a fundamentally different approach. Instead of retrieving raw documents, it works with structured knowledge think of knowledge graphs, entity relationships. KAG excels when you need reasoning, not just retrieval.

How It Works:

- Information is stored as a knowledge graph (entities + relationships)

- When a query comes in, the system traverses the graph to find connected information

- The LLM uses this structured, relationship-rich context to generate responses

Best For:

- Complex queries requiring multi-hop reasoning

- Domain-specific applications (medical diagnosis, legal analysis, financial recommendations)

- Scenarios where understanding relationships is critical

Example Use Case:

A healthcare assistant that needs to answer “What medications are safe for a patient with diabetes and high blood pressure who is allergic to penicillin?” This requires reasoning about:

- Drug interactions

- Condition compatibility

- Allergy cross-reactions

A knowledge graph can encode these relationships explicitly, allowing the system to traverse from conditions → contraindications → safe alternatives.

Key Insight: KAG is about depth and reasoning. It’s overkill for simple question-answering but essential when your domain has complex interconnected rules.